Trossen Robotics WidowX 250

In this tutorial, we will show you how to integrate and remotely control Trossen Robotics' WidowX 250 robotic arm.

This tutorial shows how to integrate the 6 DOF version of the WidowX 250 with Leo Rover. If you have the 5 DOF version, the integration process stays mostly the same. The main difference is the robot model name: for the 5 DOF version it is wx250 instead of wx250s, so make sure to adjust all of the steps accordingly.

With 6 degrees of freedom and a reach of 650 mm, the WidowX 250 is the biggest and most capable robotic arm we have ever mounted on a Leo Rover.

To make the integration easier, we highly recommend using the Powerbox addon.

What to expect?

After finishing the tutorial, you'll be able to control the arm with a joystick and the Python API, visualize the arm's model and plan its motion trajectories with ROS MoveIt.

Prerequisites

Mechanical integration

Mounting the arm is particularly easy. If you bought the arm with the modified support plate designed for our robot, you can use screws and nuts to attach the arm to the rover's mounting plate.

If you have the original support plate, you can get the model for 3D printing here:

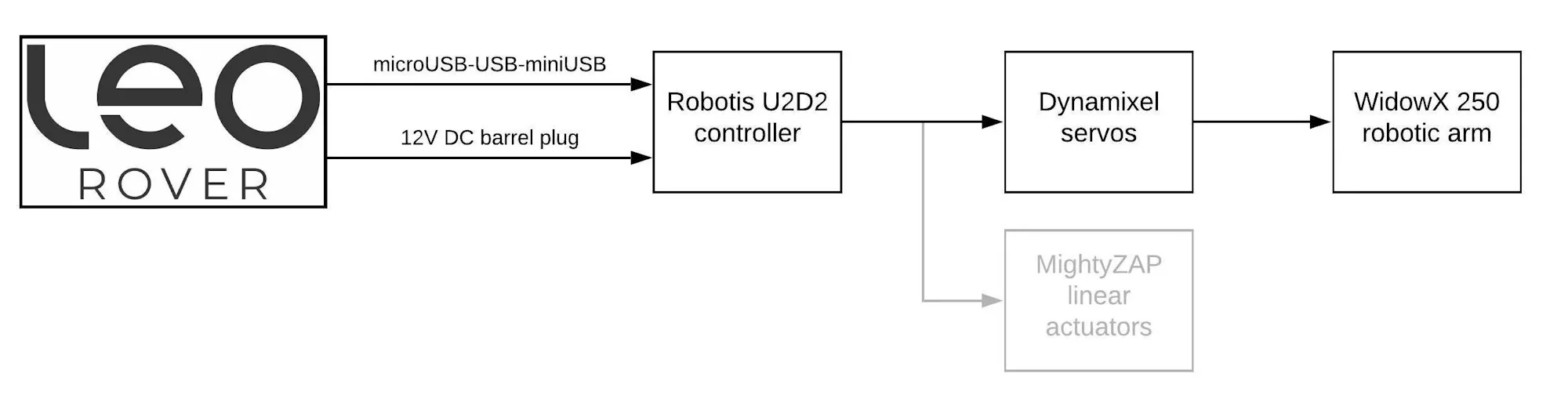

Wiring and electronics connection

Plug the Dynamixel cable coming out of the base of the arm into the power distribution board.

Connect U2D2 and the power distribution board with the short Dynamixel cable.

Connect the U2D2 to the rover using a USB cable.

Finally, connect the barrel jack cable to the battery power supply (the Powerbox addon might be useful here) and plug its other end into the power distribution board.

Software integration

Integrating the arm with the system

There are a couple of files that need to be modified on the rover's system.

We will show you how to do this using nano, a command-line text editor, but if you prefer another method of editing files, feel free to use it.

There is also a way to install all of the software using a script prepared by the manufacturer. This script streamlines the installation process, but it also installs a lot of non-essential packages. If you want to minimize the required disk space, we recommend following the steps below instead.

Make sure that your rover is connected to the internet. If not, follow the guide below.

Update the system to ensure that the newest versions of the packages and repositories are used:

sudo apt-get update && sudo apt -y upgrade

Make sure that your ROS is sourced:

source /opt/ros/<your_ros_distro>/setup.bash

Replace <your_ros_distro> with your installed ROS distribution (e.g. noetic).

Install the essential packages:

sudo apt-get install -yq curl git

sudo apt-get install -yq python3-pip

python3 -m pip install modern_robotics

sudo apt-get install -yq python3-rosdep python3-rosinstall python3-rosinstall-generator python3-wstool build-essential

Create a ROS workspace and clone the repositories:

mkdir -p /home/pi/interbotix_ws/src

cd /home/pi/interbotix_ws/src

git clone -b ${ROS_DISTRO} https://github.com/Interbotix/interbotix_ros_core.git

git clone -b ${ROS_DISTRO} https://github.com/Interbotix/interbotix_ros_manipulators.git

git clone -b ${ROS_DISTRO} https://github.com/Interbotix/interbotix_ros_toolboxes.git

By default, the Interbotix packages contain CATKIN_IGNORE files, which prevent them from being built. To build the packages for the WidowX 250, remove the following CATKIN_IGNORE files:

rm interbotix_ros_core/interbotix_ros_xseries/CATKIN_IGNORE interbotix_ros_manipulators/interbotix_ros_xsarms/CATKIN_IGNORE interbotix_ros_toolboxes/interbotix_rpi_toolbox/CATKIN_IGNORE interbotix_ros_toolboxes/interbotix_xs_toolbox/CATKIN_IGNORE interbotix_ros_toolboxes/interbotix_common_toolbox/interbotix_moveit_interface/CATKIN_IGNORE
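If you prefer to script this step, the sketch below removes the same five CATKIN_IGNORE files. It skips files that are already gone, so it is safe to run twice. The file list is copied from the rm command above. The function name is hypothetical and not part of the Interbotix tooling.

```python
from pathlib import Path

# Paths (relative to the workspace's src directory) taken from the rm command above.
CATKIN_IGNORE_FILES = [
    "interbotix_ros_core/interbotix_ros_xseries/CATKIN_IGNORE",
    "interbotix_ros_manipulators/interbotix_ros_xsarms/CATKIN_IGNORE",
    "interbotix_ros_toolboxes/interbotix_rpi_toolbox/CATKIN_IGNORE",
    "interbotix_ros_toolboxes/interbotix_xs_toolbox/CATKIN_IGNORE",
    "interbotix_ros_toolboxes/interbotix_common_toolbox/interbotix_moveit_interface/CATKIN_IGNORE",
]


def remove_catkin_ignores(src_dir):
    """Delete the listed CATKIN_IGNORE files under src_dir.

    Returns the relative paths that were actually removed. Missing files
    are skipped, so running the function again changes nothing.
    """
    removed = []
    for rel in CATKIN_IGNORE_FILES:
        marker = Path(src_dir) / rel
        if marker.is_file():
            marker.unlink()
            removed.append(rel)
    return removed
```

On the rover, you would call `remove_catkin_ignores("/home/pi/interbotix_ws/src")`.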

We need to make sure that the U2D2 device is available at a fixed path on the rover's system. To do this, copy the provided file that adds a rule to udev:

cd interbotix_ros_core/interbotix_ros_xseries/interbotix_xs_sdk

sudo cp 99-interbotix-udev.rules /etc/udev/rules.d/

sudo udevadm control --reload-rules && sudo udevadm trigger
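To confirm that the rule took effect, you can check that the fixed device path exists and see which USB serial node it points to. The sketch below assumes the Interbotix rule creates a /dev/ttyDXL symlink. Check the copied 99-interbotix-udev.rules file if your version uses a different name. The helper name is hypothetical.

```python
import os


def resolve_dxl_port(port="/dev/ttyDXL"):
    """Return the real device behind the fixed U2D2 path, or None if it is missing.

    Assumption: the udev rule installed above creates the /dev/ttyDXL symlink.
    os.path.realpath follows the symlink to the underlying device node.
    """
    if not os.path.exists(port):
        return None
    return os.path.realpath(port)
```

On the rover, with the U2D2 plugged in, `print(resolve_dxl_port())` should print a /dev/ttyUSB* path. If it prints None, the rule was not applied.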

Install ROS dependencies using rosdep:

sudo rosdep init

rosdep update

cd /home/pi/interbotix_ws

rosdep install --from-paths src --ignore-src -r -y

After the dependencies are installed, build the workspace:

catkin build -j 1

Once the packages have been built, you can edit the environment setup file to point to your result space. Open the file in nano:

nano /etc/ros/setup.bash

Comment out the first line by adding a # sign, and add the source command for your workspace. The first two lines should look like this:

#source /opt/ros/noetic/setup.bash

source /home/pi/interbotix_ws/devel/setup.bash
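The same edit can be expressed as a small text transformation. In this sketch, the function name is hypothetical. It assumes, as described above, that the first line of the file is the one sourcing /opt/ros/<distro>/setup.bash. If the workspace source line is already present, the text is returned unchanged.

```python
def point_setup_to_workspace(text, ws_setup="/home/pi/interbotix_ws/devel/setup.bash"):
    """Comment out the first line and source the workspace right after it.

    Mirrors the manual edit above. Returns the text unchanged if the
    workspace source line is already present, so repeated runs are harmless.
    """
    lines = text.splitlines()
    source_line = f"source {ws_setup}"
    if source_line in lines:
        return text  # already configured
    if lines and not lines[0].startswith("#"):
        lines[0] = "#" + lines[0]
    lines.insert(1, source_line)
    return "\n".join(lines) + "\n"
```

To apply it to the real file, you would read /etc/ros/setup.bash, pass its contents through the function and write the result back. Keeping a backup copy first is a good idea.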

Now, to add the configured arm's driver to the rover's launch file, open the robot.launch file:

nano /etc/ros/robot.launch

and paste these lines somewhere between the <launch> tags:

<include file="$(find interbotix_xsarm_control)/launch/xsarm_control.launch">

<arg name="robot_model" value="wx250s"/>

<arg name="use_world_frame" value="false"/>

<arg name="use_rviz" value="false"/>

</include>

This will start the arm driver node with the specified parameters on rover startup.
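If you generate launch files programmatically, the sketch below builds the same <include> element with Python's xml.etree.ElementTree and appends it inside a <launch> root. Pass robot_model="wx250" for the 5 DOF arm. The function names are hypothetical.

```python
import xml.etree.ElementTree as ET


def xsarm_control_include(robot_model="wx250s"):
    """Build the arm driver <include> element shown above."""
    include = ET.Element(
        "include",
        file="$(find interbotix_xsarm_control)/launch/xsarm_control.launch",
    )
    for name, value in [
        ("robot_model", robot_model),
        ("use_world_frame", "false"),
        ("use_rviz", "false"),
    ]:
        ET.SubElement(include, "arg", name=name, value=value)
    return include


def add_driver_to_launch(launch_xml, robot_model="wx250s"):
    """Append the driver include inside the <launch> root and return the new XML."""
    root = ET.fromstring(launch_xml)
    if root.tag != "launch":
        raise ValueError("expected a <launch> root element")
    root.append(xsarm_control_include(robot_model))
    return ET.tostring(root, encoding="unicode")
```

ElementTree drops XML comments when parsing. If your robot.launch contains comments you want to keep, pasting the lines by hand, as described above, is the safer option.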

You can learn more about the driver's parameters and functionalities at the interbotix_xsarm_control documentation.

You can also edit the robot's URDF file to connect the arm's base link to the rover's model. To do this, open the robot.urdf.xacro file:

nano /etc/ros/urdf/robot.urdf.xacro

and paste these lines somewhere between the <robot> tags:

<link name="wx250s/base_link"/>

<joint name="arm_joint" type="fixed">

<origin xyz="0.043 0 -0.001"/>

<parent link="base_link"/>

<child link="wx250s/base_link"/>

</joint>

Make sure that the origin coordinates are set correctly to match the arm's position on the rover.
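Because the origin values are the part you are most likely to tweak, it can help to generate the snippet from a single tuple. This sketch reproduces the link/joint pair above. The default offset is the tutorial's (0.043, 0, -0.001) in metres with no rotation. The helper name is hypothetical.

```python
import xml.etree.ElementTree as ET


def arm_mount_snippet(xyz=(0.043, 0.0, -0.001), prefix="wx250s"):
    """Return the <link>/<joint> pair attaching the arm to the rover's base_link.

    xyz is the arm base's offset from base_link in metres (no rotation).
    Use prefix="wx250" for the 5 DOF arm.
    """
    link = ET.Element("link", name=f"{prefix}/base_link")
    joint = ET.Element("joint", name="arm_joint", type="fixed")
    # :g drops trailing zeros, so 0.0 is written as "0" like in the snippet above
    ET.SubElement(joint, "origin", xyz=" ".join(f"{v:g}" for v in xyz))
    ET.SubElement(joint, "parent", link="base_link")
    ET.SubElement(joint, "child", link=f"{prefix}/base_link")
    return "\n".join(ET.tostring(e, encoding="unicode") for e in (link, joint))
```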

To learn more about what the files under /etc/ros are used for and how they relate to each other, visit the Adding additional functionality to the rover section of the ROS Development guide:

That's it! On the next boot, the arm driver node will start together with all the other nodes. You can manually restart the running nodes by typing:

sudo systemctl restart leo

If power to the arm is cut (e.g. when the rover is turned off), all of the arm's motors will be switched off and the arm will collapse uncontrollably.

To prevent this, hold the arm manually or place it in a secure position before turning off the rover.

Examples

Controlling the arm

Now that you have the driver running, you should see new ROS topics and services under the /wx250s namespace. For a full description of the ROS API, visit the manufacturer documentation.

You can test some of the features with the rostopic and rosservice command-line tools:

Publish a position command to the elbow joint:

rostopic pub /wx250s/commands/joint_single interbotix_xs_msgs/JointSingleCommand "{name: 'elbow', cmd: -0.5}"

Be careful while commanding individual arm joints. The driver doesn't prevent self-collisions in this mode of operation.

Be ready to stop publishing the movement command if the arm moves in an unexpected way.
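The same command can be sent from a Python node. This is a sketch, not the manufacturer's API. Only `joint_single_fields` runs without ROS. `publish_joint_command` needs rospy and interbotix_xs_msgs on the machine that runs it. The joint names are those of the 6 DOF wx250s as we understand them (the 5 DOF wx250 has no forearm_roll). Treat that list as an assumption and check it against your driver's configuration.

```python
# Assumed joint names of the 6 DOF wx250s; drop "forearm_roll" for the 5 DOF wx250.
WX250S_JOINTS = ("waist", "shoulder", "elbow", "forearm_roll", "wrist_angle", "wrist_rotate")


def joint_single_fields(name, cmd, joints=WX250S_JOINTS):
    """Validate the joint name and return the JointSingleCommand fields."""
    if name not in joints:
        raise ValueError(f"unknown joint {name!r}; expected one of {joints}")
    return {"name": name, "cmd": float(cmd)}


def publish_joint_command(name, cmd, robot="wx250s"):
    """Publish one JointSingleCommand (requires ROS and interbotix_xs_msgs)."""
    import rospy
    from interbotix_xs_msgs.msg import JointSingleCommand

    rospy.init_node("joint_single_example", anonymous=True)
    pub = rospy.Publisher(f"/{robot}/commands/joint_single", JointSingleCommand, queue_size=1)
    rospy.sleep(1.0)  # give the publisher time to connect to the driver
    pub.publish(JointSingleCommand(**joint_single_fields(name, cmd)))
```

Calling `publish_joint_command("elbow", -0.5)` corresponds to the rostopic command above. The same self-collision warning applies.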

Turn off the torque on all joints:

rosservice call /wx250s/torque_enable "{cmd_type: 'group', name: 'all', enable: false}"

Turn the torque back on:

rosservice call /wx250s/torque_enable "{cmd_type: 'group', name: 'all', enable: true}"
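A Python equivalent of these service calls might look like the sketch below. The request fields (cmd_type, name, enable) are the ones used in the rosservice commands above. `set_torque` needs rospy and interbotix_xs_msgs, while `torque_request` is plain Python. The function names are hypothetical. Keep in mind that disabling torque leaves the motors unpowered, just like a power cut, so hold the arm first.

```python
def torque_request(enable, name="all", cmd_type="group"):
    """Return the TorqueEnable request fields used in the rosservice calls above."""
    if cmd_type not in ("group", "single"):
        raise ValueError("cmd_type must be 'group' or 'single'")
    return {"cmd_type": cmd_type, "name": name, "enable": bool(enable)}


def set_torque(enable, robot="wx250s", **kwargs):
    """Call /<robot>/torque_enable (requires ROS and interbotix_xs_msgs)."""
    import rospy
    from interbotix_xs_msgs.srv import TorqueEnable

    service = f"/{robot}/torque_enable"
    rospy.wait_for_service(service, timeout=5.0)
    proxy = rospy.ServiceProxy(service, TorqueEnable)
    return proxy(**torque_request(enable, **kwargs))
```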

All of the examples below require the Interbotix ROS packages to be installed on your computer. To do that, you can either repeat the steps from the previous section on your PC or use the script provided by the manufacturer. The script is the recommended option here.

Make sure that ROS is installed on your computer and properly configured to communicate with the nodes running on the rover. For this, you can visit the Connecting other computer to ROS network section of the ROS Development tutorial:

After the installation, source the devel space to make the new packages visible in your shell environment:

source ~/interbotix_ws/devel/setup.bash

You will have to do this in every terminal session in which you want to use the packages, so it is convenient to add this line to the ~/.bashrc file.
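A plain `echo ... >> ~/.bashrc` works, but it adds a duplicate line every time it is run. This sketch appends the source line only if it is not already there. The helper name is hypothetical. The workspace path is the ~/interbotix_ws location used above.

```python
from pathlib import Path

SOURCE_LINE = "source ~/interbotix_ws/devel/setup.bash"


def add_to_bashrc(bashrc=None, line=SOURCE_LINE):
    """Append `line` to ~/.bashrc (or the given file) unless it is already present.

    Returns True if the file was modified.
    """
    path = Path(bashrc) if bashrc else Path.home() / ".bashrc"
    text = path.read_text() if path.exists() else ""
    if line in (existing.strip() for existing in text.splitlines()):
        return False
    # Start on a fresh line if the file does not end with a newline
    prefix = "" if text == "" or text.endswith("\n") else "\n"
    with path.open("a") as f:
        f.write(prefix + line + "\n")
    return True
```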

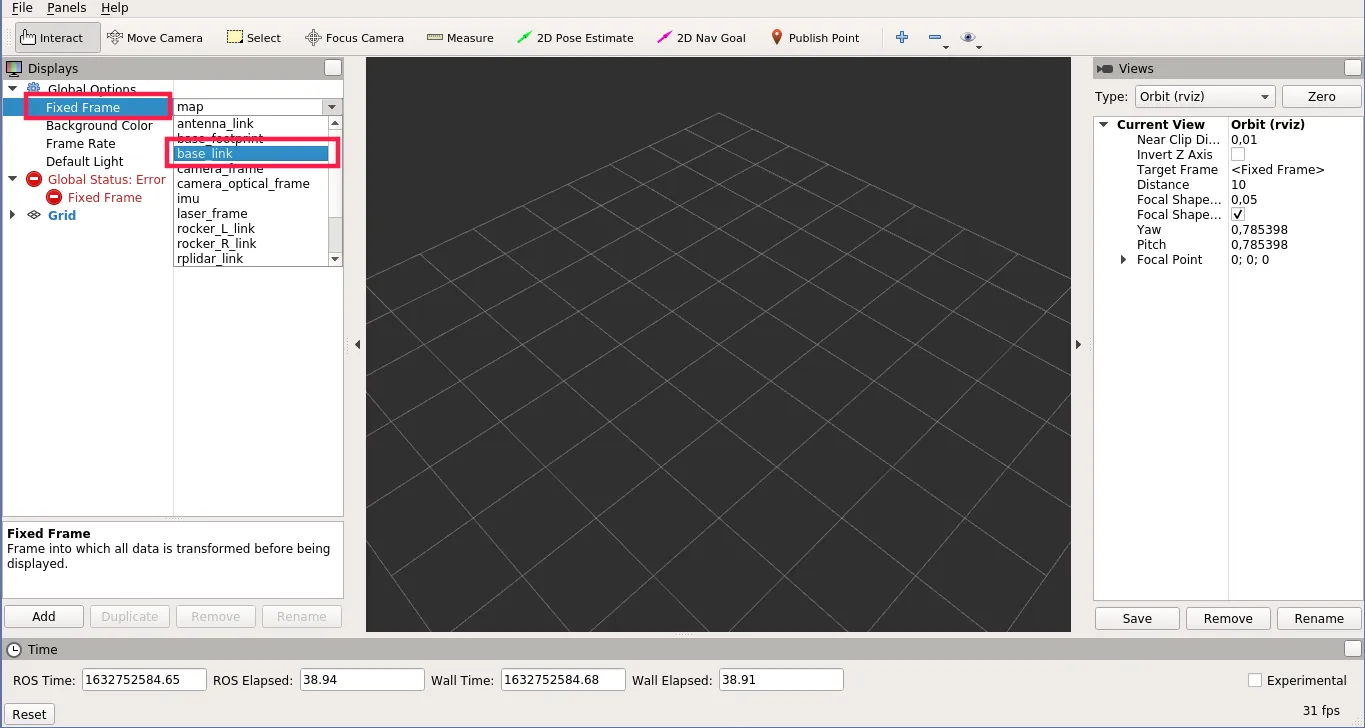

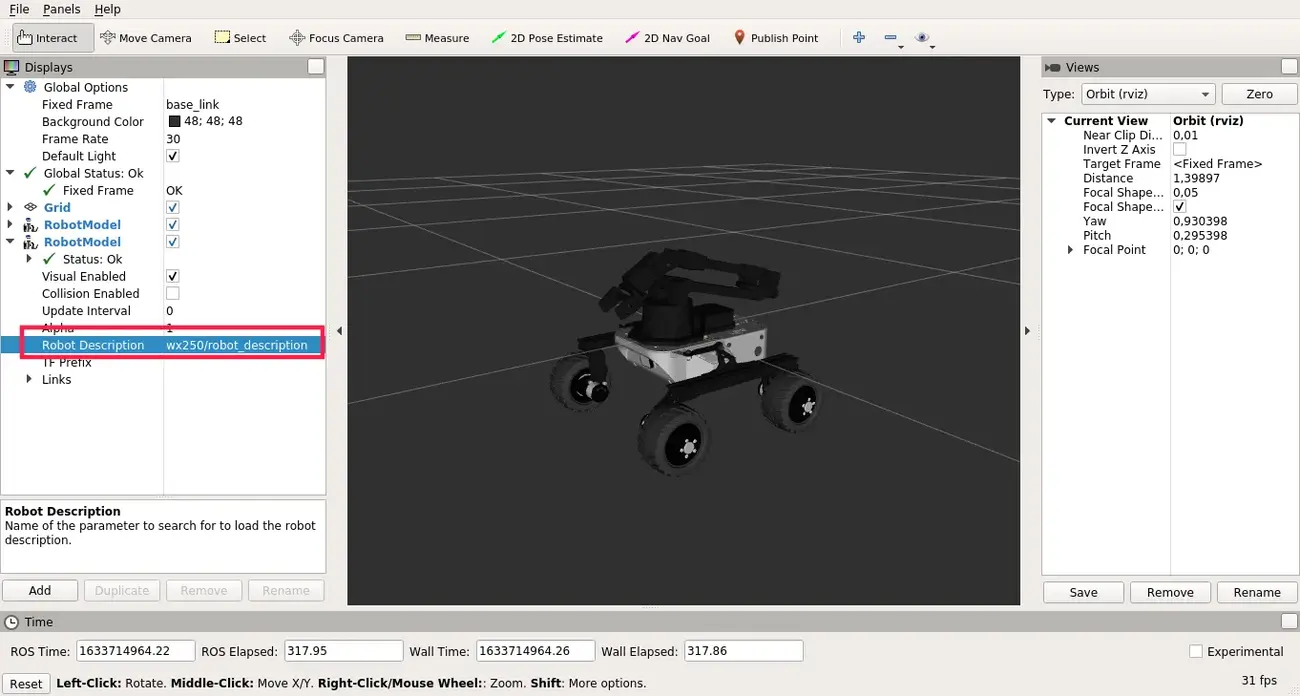

Visualizing the model

- Open RViz by typing rviz in the terminal.

- Choose base_link as the Fixed Frame.

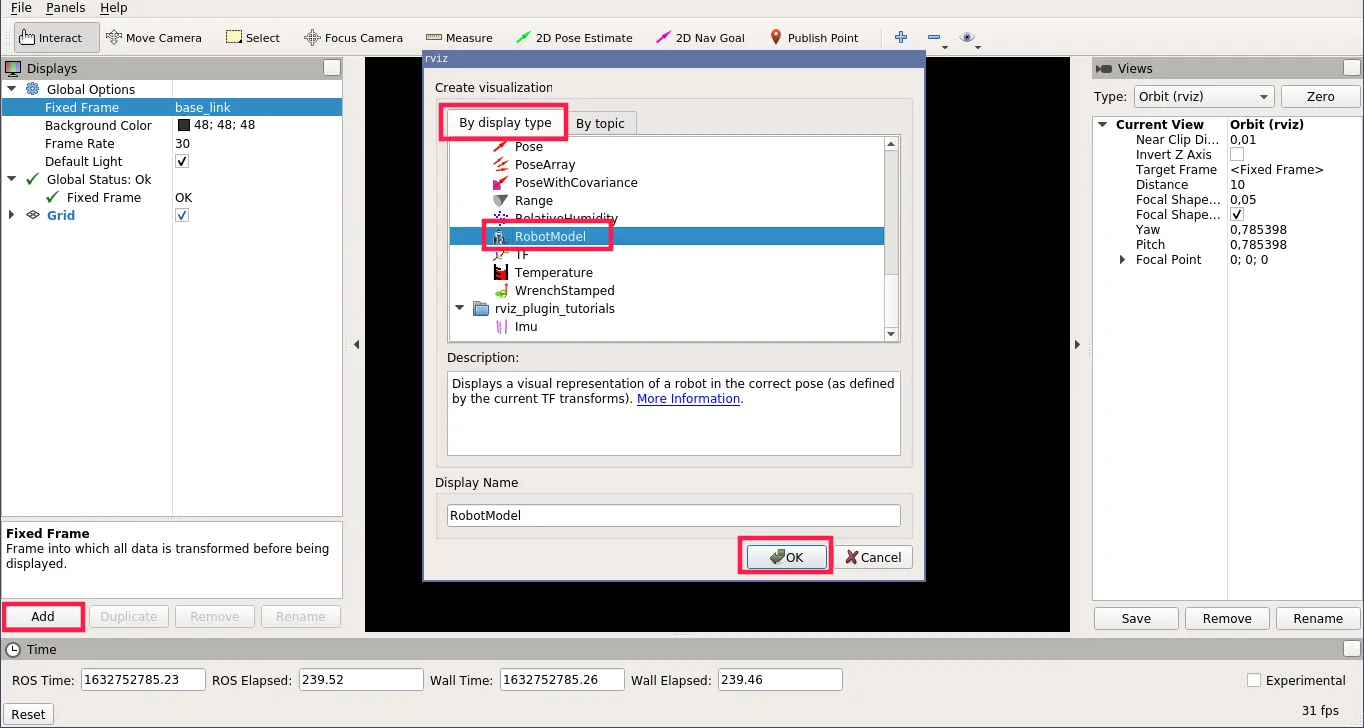

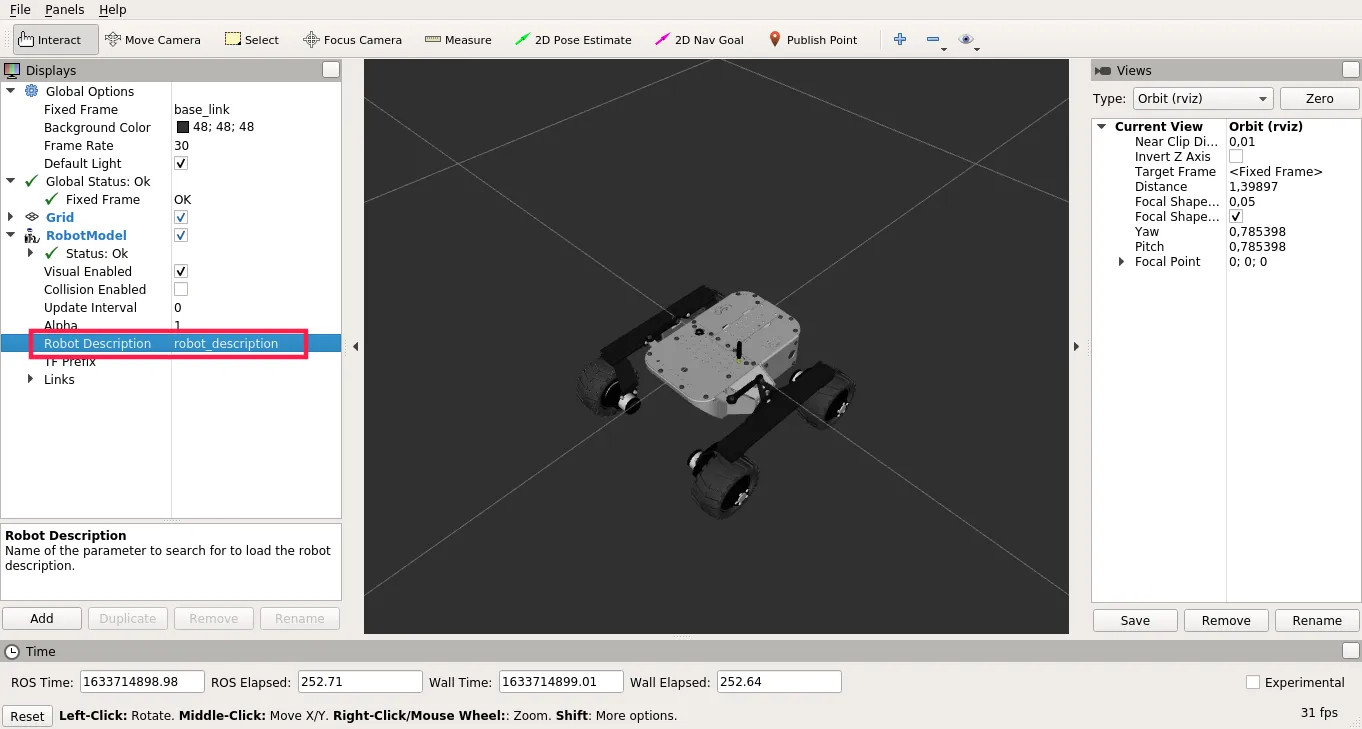

- On the Displays panel, click on Add and choose RobotModel.

- For the Robot Description parameter, choose robot_description.

- Add another RobotModel display, but for the Robot Description parameter choose wx250s/robot_description.

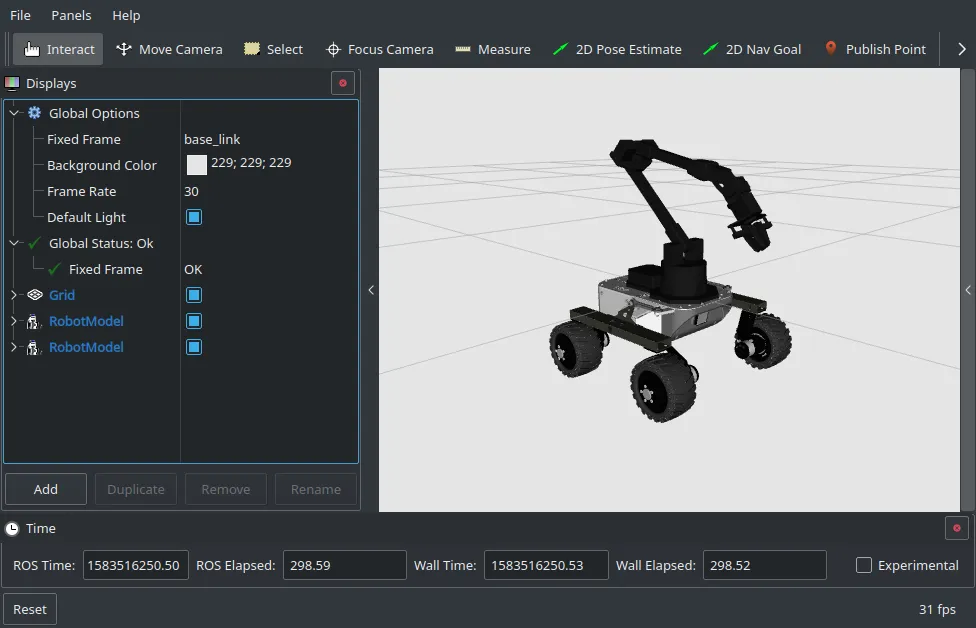

The effect should look similar to this:

If the arm is not properly aligned with the rover, you can go back to the /etc/ros/urdf/robot.urdf.xacro file and adjust the origin coordinates of the arm_joint.
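Since arm_joint is fixed and has no rotation, its origin is a pure translation. A point given in the arm's base frame appears in base_link shifted by the origin vector. The sketch below illustrates this. It also shows one way to correct the origin once you have estimated how far the model is displaced from the real arm, measured along the base_link axes. Both helpers are hypothetical illustrations.

```python
def arm_point_in_base_link(point, origin=(0.043, 0.0, -0.001)):
    """Express a point given in the arm's base frame in the rover's base_link.

    arm_joint is fixed with no rotation, so this is a translation by the origin (metres).
    """
    return tuple(p + o for p, o in zip(point, origin))


def corrected_origin(origin, observed_offset):
    """Shift the joint origin to cancel a displacement seen in RViz.

    observed_offset = (where the model appears) - (where the real arm is),
    in base_link axes and metres. Subtracting it moves the model onto the arm.
    """
    return tuple(o - e for o, e in zip(origin, observed_offset))
```

For example, if the model appears 1 cm too far forward (+x), `corrected_origin((0.043, 0.0, -0.001), (0.01, 0.0, 0.0))` gives roughly (0.033, 0.0, -0.001).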

Using a gamepad to control the arm

The interbotix_xsarm_joy package provides the capability to control the movement of the arm (utilizing inverse kinematics) with a PS3, PS4 or an Xbox gamepad.

To launch the joy controller on your PC (using a PS4 gamepad), type:

roslaunch interbotix_xsarm_joy xsarm_joy.launch robot_model:=wx250s launch_driver:=false controller:=ps4

If you are using a different controller, change the controller parameter to ps3 or xbox360. The xbox360 setting also works with the Xbox One controller.

You can find the button mappings on the joystick_control docs page.
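If you launch the joystick node from a script, building the argument list in one place keeps the controller value valid. This sketch reproduces the roslaunch command above. It accepts only the three controller values mentioned in this tutorial. The function name is hypothetical.

```python
import shlex

# Controller values mentioned in this tutorial; xbox360 also covers the Xbox One pad.
CONTROLLERS = {"ps3", "ps4", "xbox360"}


def joy_launch_command(controller="ps4", robot_model="wx250s"):
    """Build the xsarm_joy roslaunch command to run on your PC.

    launch_driver:=false because the arm driver already runs on the rover.
    """
    if controller not in CONTROLLERS:
        raise ValueError(f"controller must be one of {sorted(CONTROLLERS)}")
    return [
        "roslaunch", "interbotix_xsarm_joy", "xsarm_joy.launch",
        f"robot_model:={robot_model}",
        "launch_driver:=false",
        f"controller:={controller}",
    ]
```

You could run it with `subprocess.run(joy_launch_command("xbox360"), check=True)` or print it with `shlex.join(...)` to paste into a terminal.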

Planning the trajectory with MoveIt

The MoveIt motion planning framework allows us to plan and execute a collision-free trajectory to the destination pose of the end-effector. To use it, the arm driver node must use ros_control, so the driver launch file definition must be changed accordingly. To do this, open the robot launch file on the rover:

nano /etc/ros/robot.launch

and change the lines added during installation (between the <launch> tags):

<include file="$(find interbotix_xsarm_control)/launch/xsarm_control.launch">

<arg name="robot_model" value="wx250s"/>

<arg name="use_world_frame" value="false"/>

<arg name="use_rviz" value="false"/>

</include>

to:

<include file="$(find interbotix_xsarm_moveit)/launch/xsarm_moveit.launch">

<arg name="robot_model" value="wx250s"/>

<arg name="use_world_frame" value="false"/>

<arg name="use_rviz" value="false"/>

<arg name="dof" value="6"/>

<arg name="use_actual" value="true"/>

</include>

If you are using a 5 DOF version of the arm, change the dof parameter to 5.
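A mismatch between robot_model and dof is easy to miss. This sketch parses a launch file and reports any xsarm_moveit include whose dof value disagrees with its model. The mapping wx250s: 6, wx250: 5 comes from the start of this tutorial. The function name is hypothetical.

```python
import xml.etree.ElementTree as ET

# DOF of each WidowX 250 variant, as described at the start of this tutorial.
DOF_BY_MODEL = {"wx250s": 6, "wx250": 5}


def check_moveit_include(launch_xml):
    """Return a list of problems found in xsarm_moveit includes (empty if none)."""
    problems = []
    root = ET.fromstring(launch_xml)
    for inc in root.iter("include"):
        if "xsarm_moveit.launch" not in inc.get("file", ""):
            continue
        args = {a.get("name"): a.get("value") for a in inc.findall("arg")}
        model, dof = args.get("robot_model"), args.get("dof")
        expected = DOF_BY_MODEL.get(model)
        if expected is None:
            problems.append(f"unknown robot_model {model!r}")
        elif dof != str(expected):
            problems.append(f"{model} needs dof={expected}, found dof={dof}")
    return problems
```

Running it on the contents of /etc/ros/robot.launch should return an empty list.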

This will make the driver launch alongside MoveIt and ros_control on startup. To launch the driver, either restart the rover or type:

sudo systemctl restart leo

If you want to launch the MoveIt driver from the command line on the rover, you can use the command below:

roslaunch interbotix_xsarm_moveit xsarm_moveit.launch robot_model:=wx250s use_world_frame:=false use_moveit_rviz:=false dof:=6 use_actual:=true

Just remember to remove (or comment out) the arm driver include from autostart (in /etc/ros/robot.launch) and restart the leo service (sudo systemctl restart leo). Otherwise, two instances of the driver will be launched, which may lead to issues.
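To check for this situation, you can count the driver includes that are still active in robot.launch. This sketch treats both xsarm_control.launch and xsarm_moveit.launch as starting a driver. That assumption is consistent with the replacement described above. Commented-out includes are not counted, because ElementTree skips XML comments when parsing. The function name is hypothetical.

```python
import xml.etree.ElementTree as ET

# Launch files that each start an arm driver instance (assumption, see above).
DRIVER_LAUNCH_FILES = ("xsarm_control.launch", "xsarm_moveit.launch")


def count_driver_includes(launch_xml):
    """Count active <include> elements that start the arm driver.

    XML comments are dropped by the parser, so commented-out includes are ignored.
    """
    root = ET.fromstring(launch_xml)
    return sum(
        1
        for inc in root.iter("include")
        if inc.get("file", "").endswith(DRIVER_LAUNCH_FILES)
    )
```

If `count_driver_includes(open("/etc/ros/robot.launch").read())` returns more than 1 while you also launch MoveIt manually, expect two drivers competing for the arm.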

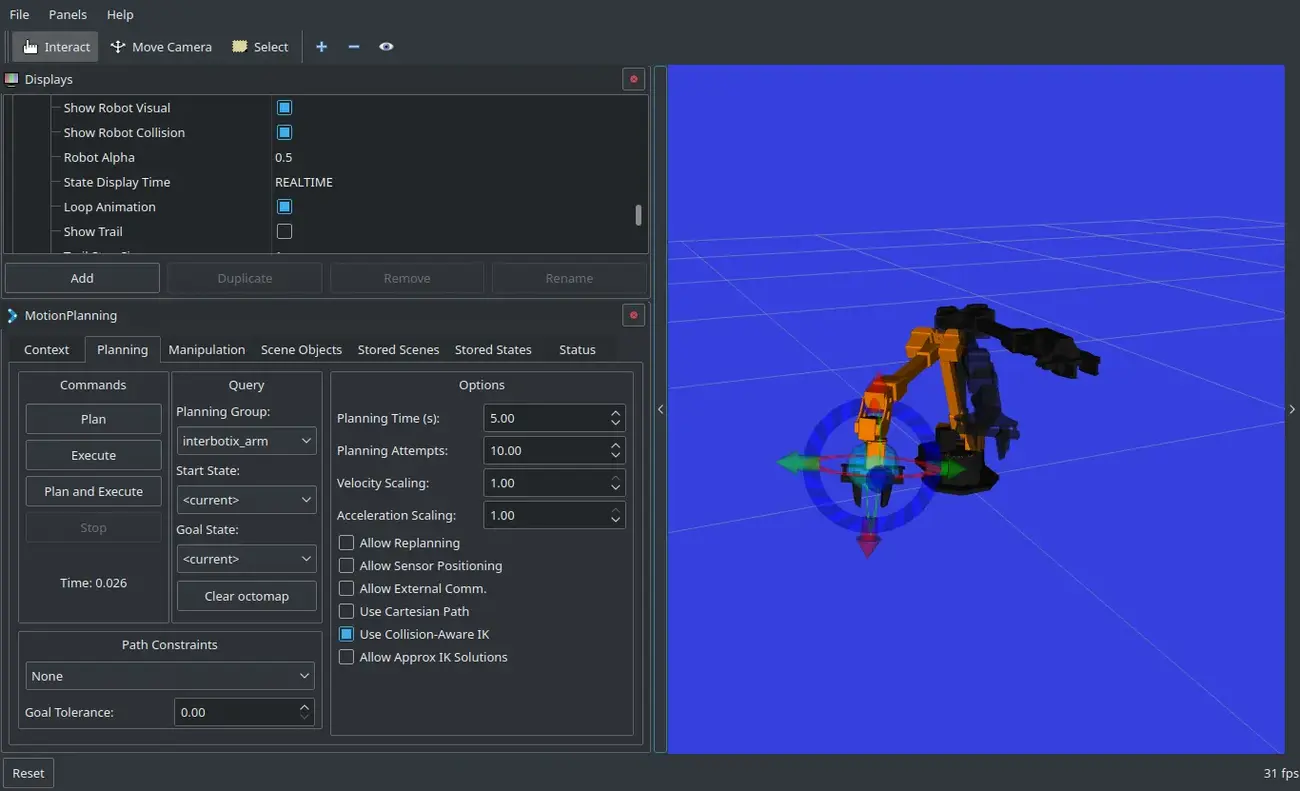

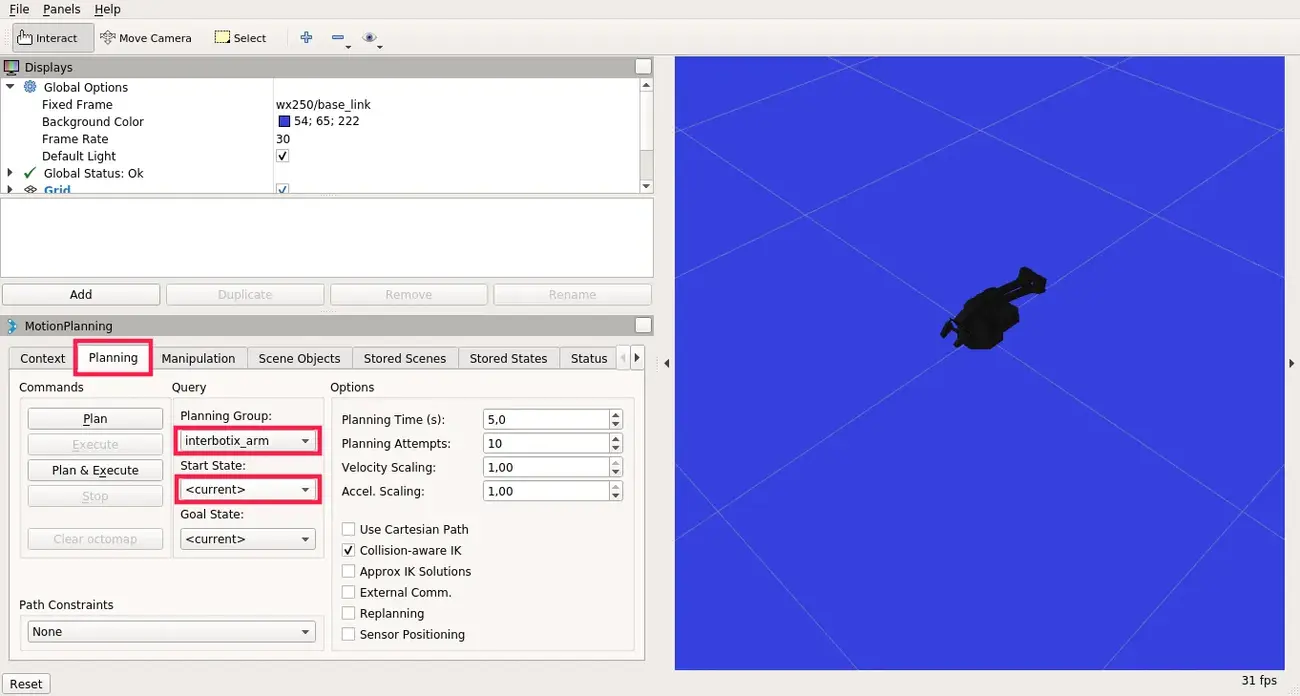

To command MoveIt, you can launch RViz from your computer (not the rover):

ROS_NAMESPACE=wx250s roslaunch interbotix_xsarm_moveit moveit_rviz.launch config:=true rviz_frame:=wx250s/base_link

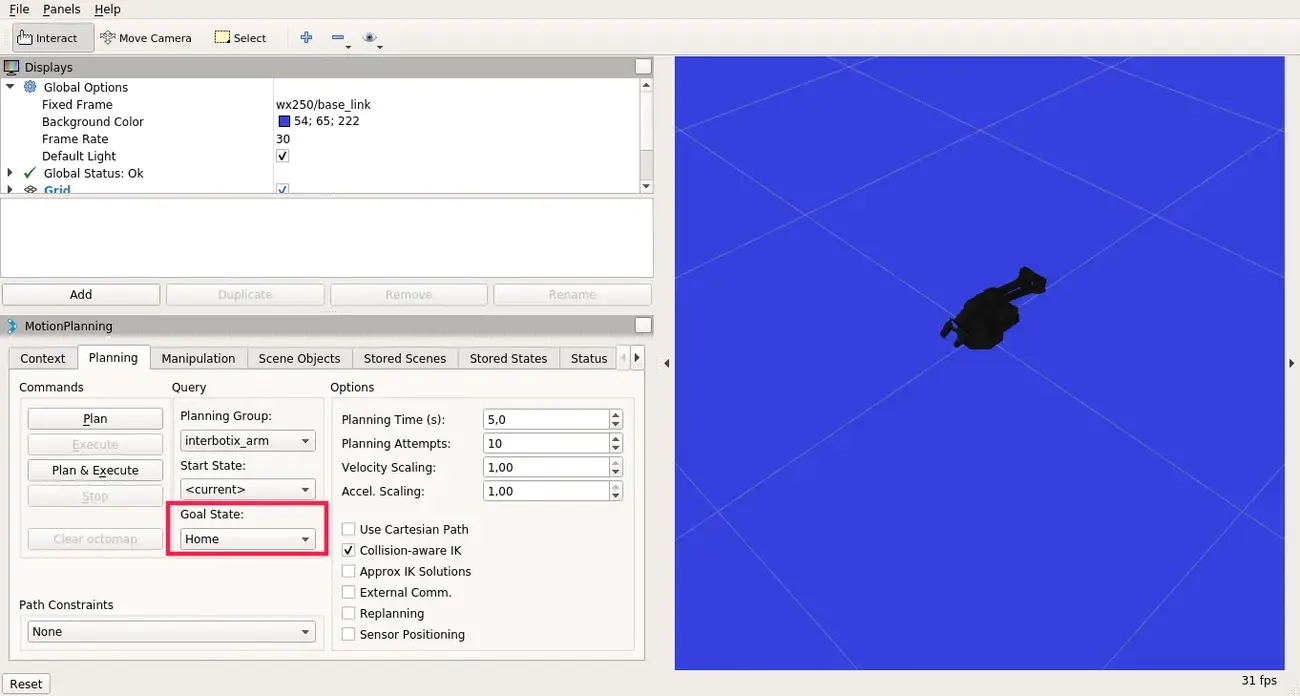

The MoveIt GUI should appear:

On the MotionPlanning panel, click on the Planning tab, then choose interbotix_arm for the Planning Group and <current> for the Start State (to operate the gripper, change the Planning Group to interbotix_gripper).

There are some predefined poses which you can choose for the Goal State, such as home, sleep or upright.

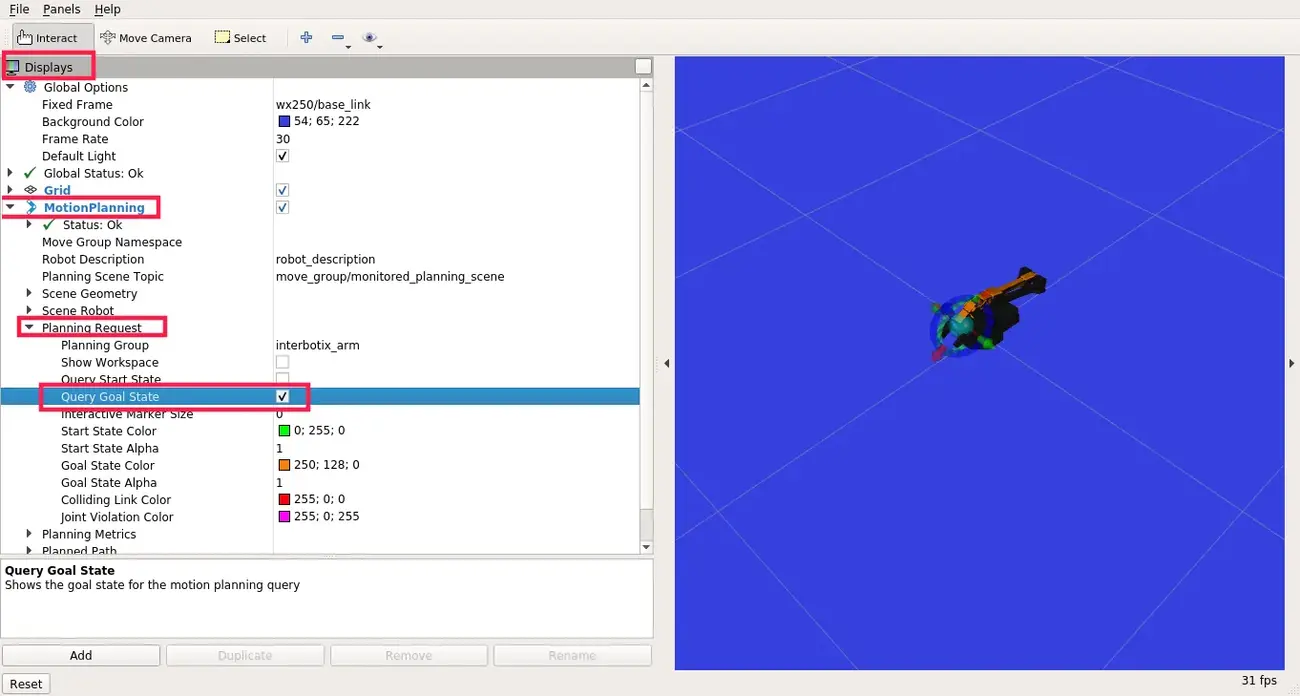

To set the pose manually, navigate to the Displays panel -> MotionPlanning -> Planning Request and check Query Goal State. You should now be able to manually set the end-effector pose for the goal state.

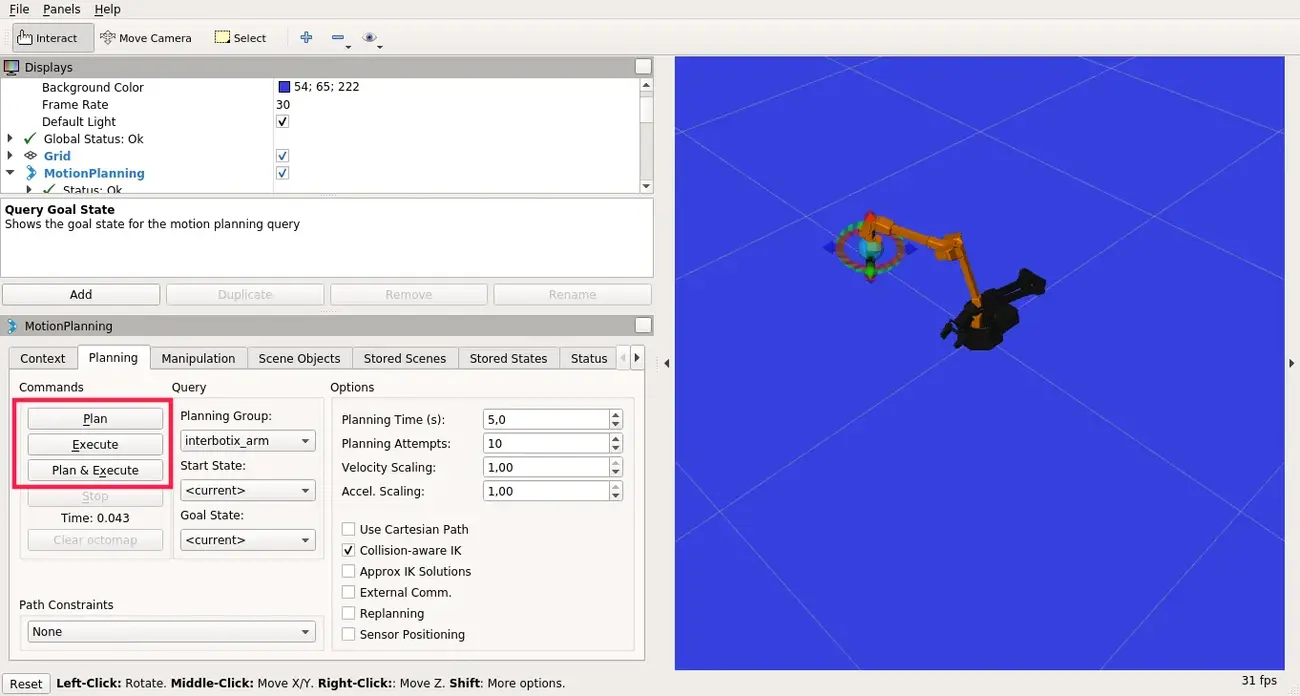

When the goal state is set, click on the Plan button to plan the trajectory (the simulated trajectory visualization should appear) and Execute to send the trajectory to the driver.

If you want to use the MoveIt capabilities in a Python script or a C++ program, please look at the interbotix_moveit_interface example.
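For a first taste before reading that example, the sketch below moves the arm to one of the named poses from the GUI using moveit_commander. It assumes the planning group interbotix_arm and the pose names home, sleep and upright shown above. Like the RViz command, it assumes it is started with ROS_NAMESPACE=wx250s, so the default robot_description and move_group names resolve under /wx250s. Only `check_named_pose` runs without ROS. The function names are hypothetical, and interbotix_moveit_interface remains the reference.

```python
# Named goal states shown in the MoveIt GUI for the interbotix_arm group.
NAMED_POSES = ("home", "sleep", "upright")


def check_named_pose(pose):
    """Return pose if it is one of the known named goal states."""
    if pose not in NAMED_POSES:
        raise ValueError(f"unknown pose {pose!r}; expected one of {NAMED_POSES}")
    return pose


def go_to_named_pose(pose):
    """Plan and execute a move to a named pose (requires ROS and MoveIt).

    Run with ROS_NAMESPACE=wx250s so names resolve under the arm's namespace.
    """
    import sys

    import moveit_commander
    import rospy

    moveit_commander.roscpp_initialize(sys.argv)
    rospy.init_node("named_pose_example", anonymous=True)
    group = moveit_commander.MoveGroupCommander("interbotix_arm")
    group.set_named_target(check_named_pose(pose))
    success = group.go(wait=True)  # plan and execute in one call
    group.stop()  # make sure no residual motion remains
    return success
```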

What next?

If you found this tutorial interesting, make sure to check out other tutorials we provide on our Integrations site.